FROM THE TEAM

iuvo Blog & News

Insights, ideas, and field notes from the iuvonauts — written for the people making IT decisions every day.


IT Consulting

Replacing Windows with Debian Linux

Jan 20, 2026 11:00:01 AM

IT Infrastructure

Windows 10 End of Support: What Your Business Needs to Know

Jul 1, 2025 11:15:00 AM

IT Infrastructure

Low Cost, and Safe DeepSeek-R1

Mar 4, 2025 11:00:00 AM

Cloud

The Short-Term Future of Computer Virtualization with VMware

May 21, 2024 11:00:00 AM

IT Management

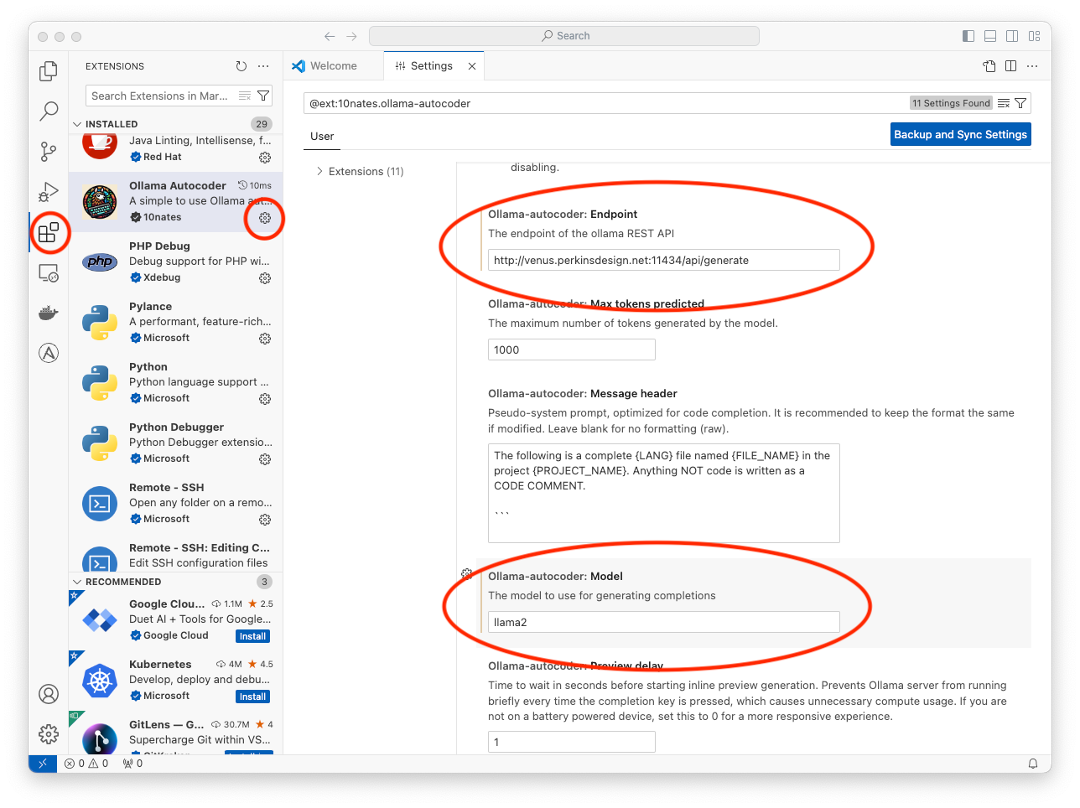

Local LLMs Part 3 – Linux

Apr 2, 2024 9:30:00 AM

IT Management

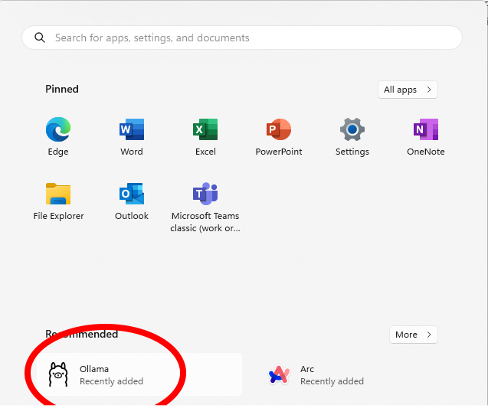

Local LLMs Part 2 – Microsoft Windows

Mar 20, 2024 12:48:12 PM

IT Management

Local LLMs Part 1 – Apple MacOS

Feb 29, 2024 11:00:00 AM

Cloud

Supporting On Premises Microsoft Exchange

Aug 8, 2023 11:00:00 AM

Cloud

Google Workspace vs. Microsoft 365: Which is Right for You?

Nov 1, 2022 9:30:00 AM
